Manual accessibility tests: Why you need more than automated testing

When did you last test an app and miss where users struggle?

Here are some typical examples: a “Continue” button that looks clean in the design, but is so small that users repeatedly miss it. Or a contrast that works in the design file, but becomes barely readable under real-world conditions. Or a video without captions that cannot be followed when the audio is unavailable or cannot be heard.

These aren’t edge cases. They are design and development decisions that determine whether an app works—or doesn’t.

The difference: for many, these are moments of frustration. For people with visual impairments, motor limitations or hearing loss, they are not exceptions—they are everyday experience.

This is where the real leverage lies: accessibility should not be treated as an afterthought, but as an integral part of design, development and testing. In the next section, you’ll see how to identify common barriers early in your own testing—and how to avoid them systematically.

Many fundamental accessibility issues can already be identified within your existing workflow—without additional tools. These three checks help you spot common problems early:

Increase your smartphone’s system font size to “Large”. Does the app adapt properly—or does the layout break?

If content is cut off, overlaps, or becomes unreadable, this is a clear sign that flexible layouts haven’t been properly considered—particularly problematic for users who rely on larger text.

A contrast that looks fine in a design tool can quickly fall short in real-world use.

Test key UI elements under realistic conditions, for example in bright ambient light, and consider how they appear to users with reduced vision. If content becomes difficult to read, this isn’t a design preference; it’s an accessibility issue.
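A measured contrast ratio gives this check a baseline before you take the device outside. The article does not name a threshold, so the sketch below assumes the WCAG 2.x relative-luminance formula and its AA minimums (4.5:1 for normal text, 3:1 for large text). The function names are illustrative, and a passing ratio does not replace the real-world test described above.

```python
def _channel_to_linear(value: int) -> float:
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = value / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_colour: str) -> float:
    """Relative luminance of a colour written as '#RRGGBB'."""
    h = hex_colour.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return (0.2126 * _channel_to_linear(r)
            + 0.7152 * _channel_to_linear(g)
            + 0.0722 * _channel_to_linear(b))


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colours, from 1.0 (identical) to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """Assumed thresholds: WCAG 2.x AA, 4.5:1 normal text, 3:1 large text."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= threshold


if __name__ == "__main__":
    # Mid-grey text on a white background, a common borderline case.
    print(round(contrast_ratio("#767676", "#FFFFFF"), 2))
    print(passes_aa("#777777", "#FFFFFF"))
```

Colours that only just pass in this calculation are the ones most likely to fail in bright light, so they are good candidates for the manual check.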

Are interactive elements large enough and sufficiently spaced?

Small or closely spaced buttons often lead to mis-taps—especially during one-handed use, with hand tremors, or reduced fine motor control. Adequate touch target sizes are not an edge-case optimisation, but a fundamental UX requirement.
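If you can export the on-screen bounds of interactive elements, the size and spacing question can be pre-screened with a few lines of code. The sketch below is an illustration only: the `Target` structure, the 44-unit minimum size (in line with Apple's 44 pt guidance; Android's guidance is 48 dp) and the 8-unit minimum gap are assumptions, not values stated in this article.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Target:
    """Hypothetical touch target: top-left corner plus size, in points/dp."""
    name: str
    x: float
    y: float
    width: float
    height: float


MIN_SIZE = 44.0  # assumed minimum edge length
MIN_GAP = 8.0    # assumed minimum spacing between neighbouring targets


def too_small(targets, min_size=MIN_SIZE):
    """Names of targets whose width or height is below the minimum."""
    return [t.name for t in targets if t.width < min_size or t.height < min_size]


def gap(a: Target, b: Target) -> float:
    """Shortest edge-to-edge distance; 0.0 if the rectangles touch or overlap."""
    dx = max(b.x - (a.x + a.width), a.x - (b.x + b.width), 0.0)
    dy = max(b.y - (a.y + a.height), a.y - (b.y + b.height), 0.0)
    return (dx ** 2 + dy ** 2) ** 0.5


def too_close(targets, min_gap=MIN_GAP):
    """Pairs of targets that sit closer together than the minimum gap."""
    return [(a.name, b.name) for a, b in combinations(targets, 2)
            if gap(a, b) < min_gap]


if __name__ == "__main__":
    screen = [
        Target("continue", 16, 600, 32, 32),
        Target("back", 52, 600, 48, 48),
        Target("help", 300, 40, 48, 48),
    ]
    print(too_small(screen))   # targets below the assumed minimum size
    print(too_close(screen))   # pairs below the assumed minimum gap
```

A check like this only flags candidates. Whether a target is actually easy to hit one-handed still has to be tried on a device.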

One of the fastest ways to uncover accessibility issues is to shift perspective in your testing—by navigating without visual cues.

Screen readers make this possible. They present content in a structured way and reveal how well semantics, labelling and navigation logic have actually been implemented. At the same time, they show whether your app remains usable without visual orientation. For people with visual impairments, this is the foundation of everyday use.

iOS: VoiceOver (Settings → Accessibility)

Android: TalkBack (Settings → Accessibility)

How to approach this in testing:

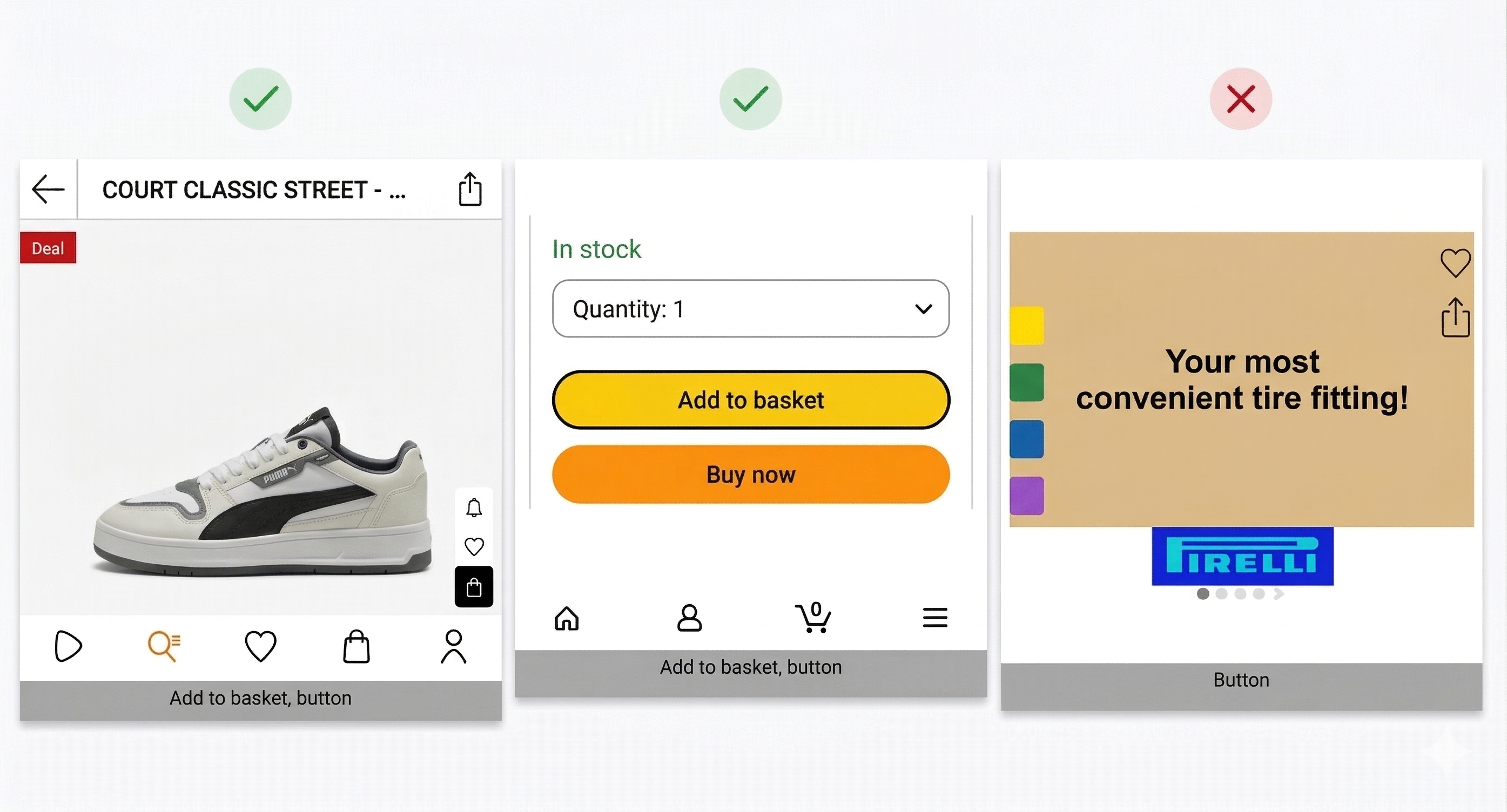

Enable the screen reader and navigate step by step through the app by swiping right with one finger; swiping left moves back to the previous element. Elements are focused and read out in sequence; actions are triggered with a double tap.

Try to complete a typical task from a user perspective—for example adding a product to the basket or filling out a form.

Pay attention to the semantic output:

If an element is only announced as “button” or “image”, the semantic information is missing—users don’t know what action it performs.

If, on the other hand, the output clearly communicates what will happen—such as “Add to basket” or “Product image: blue T-shirt, size M”—the app is on the right track.
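When you note down what the screen reader says during such a pass, role-only announcements can be picked out mechanically. The following is a minimal sketch under stated assumptions: the list of generic role names and the helper names are hypothetical, and real screen reader output varies by platform and language.

```python
# Assumed set of announcements that name only a role and no purpose.
GENERIC_ROLES = {"button", "image", "link", "text field", "checkbox"}


def is_role_only(announcement: str) -> bool:
    """True if the announcement consists of nothing but a generic role name."""
    normalised = announcement.strip().strip(".").lower()
    return normalised in GENERIC_ROLES


def review(announcements):
    """Return (step number, text) for every role-only announcement."""
    return [(step, text)
            for step, text in enumerate(announcements, start=1)
            if is_role_only(text)]


if __name__ == "__main__":
    heard = [
        "Add to basket, button",
        "Button",
        "Product image: blue T-shirt, size M",
        "image",
    ]
    for step, text in review(heard):
        print(f"Step {step}: '{text}' does not say what the element does")
```

The step numbers point back to where in the swipe sequence the unlabelled element was encountered, which makes the finding easier to reproduce.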

This test gives you a realistic sense of how accessible an app actually is. At the same time, it quickly becomes clear: many issues are subtle—and difficult to identify reliably without systematic testing and real user perspectives.

Many of these issues remain unnoticed even when a screen reader is enabled. They are often caused by missing semantics, unclear states or incorrect focus handling—and are difficult to detect without structured testing and real-world usage perspectives.

The following examples come from real applications and occur far more frequently in practice than you might expect.

In a shopping app, filter options for colour or size may be visually present—but not accessible to the screen reader. The elements are not focusable or missing from the accessibility tree. For users, this functionality effectively does not exist.

A dropdown menu opens—but the screen reader does not announce the change of state. A checkbox is selected, but no feedback is given. Without correctly defined states (e.g. “expanded”, “checked”), users lack essential orientation.

“Item added to basket” appears briefly on screen and disappears again. Without a live region or announcement, this feedback is not conveyed to screen reader users.

An error message appears and blocks the interface—but cannot be dismissed or exited. The screen reader may announce it, but offers no clear focus or path back into the flow.

The screen reader moves into areas that are not visually active—such as a collapsed calendar. Users navigate through content that is not currently usable. Without consistent focus management, this leads to disorientation.

A product image is announced simply as “image”. Without alternative text, key information such as colour, form or context is missing—the element loses its meaning.

A field is announced as “text field” without any label or context. Users don’t know what information is expected. Without properly associated labels, forms become unusable.
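Several of the examples above can be expressed as simple rules over an accessibility tree. The sketch below assumes a hypothetical, simplified `Node` structure; it is not the format of any real platform's accessibility API, and the role and state names are illustrative. It covers unreachable controls, missing states, focusable hidden content and missing labels. Transient messages and blocked dialogs depend on runtime behaviour and are not modelled here.

```python
from dataclasses import dataclass, field

# Assumed role vocabulary for this sketch.
INTERACTIVE = {"button", "checkbox", "dropdown", "text field"}
REQUIRED_STATE = {"checkbox": ("checked",), "dropdown": ("expanded",)}
NEEDS_LABEL = INTERACTIVE | {"image"}


@dataclass
class Node:
    """Hypothetical, simplified accessibility node."""
    role: str
    label: str = ""
    visible: bool = True
    focusable: bool = False
    state: dict = field(default_factory=dict)
    children: list = field(default_factory=list)


def audit(node: Node, path: str = "root"):
    """Walk the tree depth-first and collect (path, problem) findings."""
    here = f"{path}/{node.role}"
    findings = []
    if node.visible and node.role in INTERACTIVE and not node.focusable:
        findings.append((here, "visible control is not focusable"))
    if not node.visible and node.focusable:
        findings.append((here, "hidden element is focusable"))
    if node.visible and node.role in NEEDS_LABEL and not node.label.strip():
        findings.append((here, "missing label"))
    for key in REQUIRED_STATE.get(node.role, ()):
        if key not in node.state:
            findings.append((here, f"state '{key}' not exposed"))
    for child in node.children:
        findings.extend(audit(child, here))
    return findings


if __name__ == "__main__":
    screen = Node("screen", children=[
        Node("dropdown", label="Colour", focusable=True),  # no 'expanded' state
        Node("button", label="Size filter"),               # cannot be focused
        Node("image", focusable=True),                     # no alternative text
        Node("calendar", visible=False, focusable=True),   # collapsed but focusable
        Node("text field", focusable=True),                # no label
        Node("checkbox", label="Gift wrap", focusable=True,
             state={"checked": False}),                    # no findings
    ])
    for where, problem in audit(screen):
        print(f"{where}: {problem}")
```

Rules like these catch the structural part of each problem. Whether the resulting announcements make sense in context still needs a person listening to them.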

The three checks in this article can be easily integrated into your existing workflow—without additional tools or significant effort. They help you surface fundamental accessibility issues early and identify common UX problems more quickly.

At the same time, real-world experience shows that accessibility goes much deeper. Many of the issues described above stem from missing semantics, unclear states or incorrect focus handling—and cannot be fully identified without systematic testing and real user perspectives.

This is where Eye-Able comes in: we combine technical testing with the perspective of people who use apps daily with screen readers and other assistive technologies—uncovering barriers that often remain hidden in traditional QA processes.

Together with our partner Abra, we combine automated checks with manual testing by our expert team—providing a comprehensive view of your app’s accessibility, directly within your development and QA processes.

Automated testing identifies typical technical issues such as incorrect text scaling, insufficient contrast or missing labels. Manual reviews and testing with real users reveal how these issues impact actual usage—precisely where purely tool-based analysis reaches its limits.

